Category: Blog

-

What if AI was a professional soccer player?

Reading headlines one will marvel at the abilities of generative AI, but looking at the comparatively limited usage of generative AI in the industry, which is pretty basic, one is left wondering. The key question is if AI is so capable why hasn’t it already taken over everywhere? To understand this, imagine you are the…

-

Can I make a suggestion? Interrogate, Reflect, Choose

Is this situation familiar? you are working on an important project and somebody makes a suggestion that is so crazy that you want to say “Sure, you can do that. You can do a lot of things. You can roll up a wad of 100 dollar bills and put them up your ass and light…

-

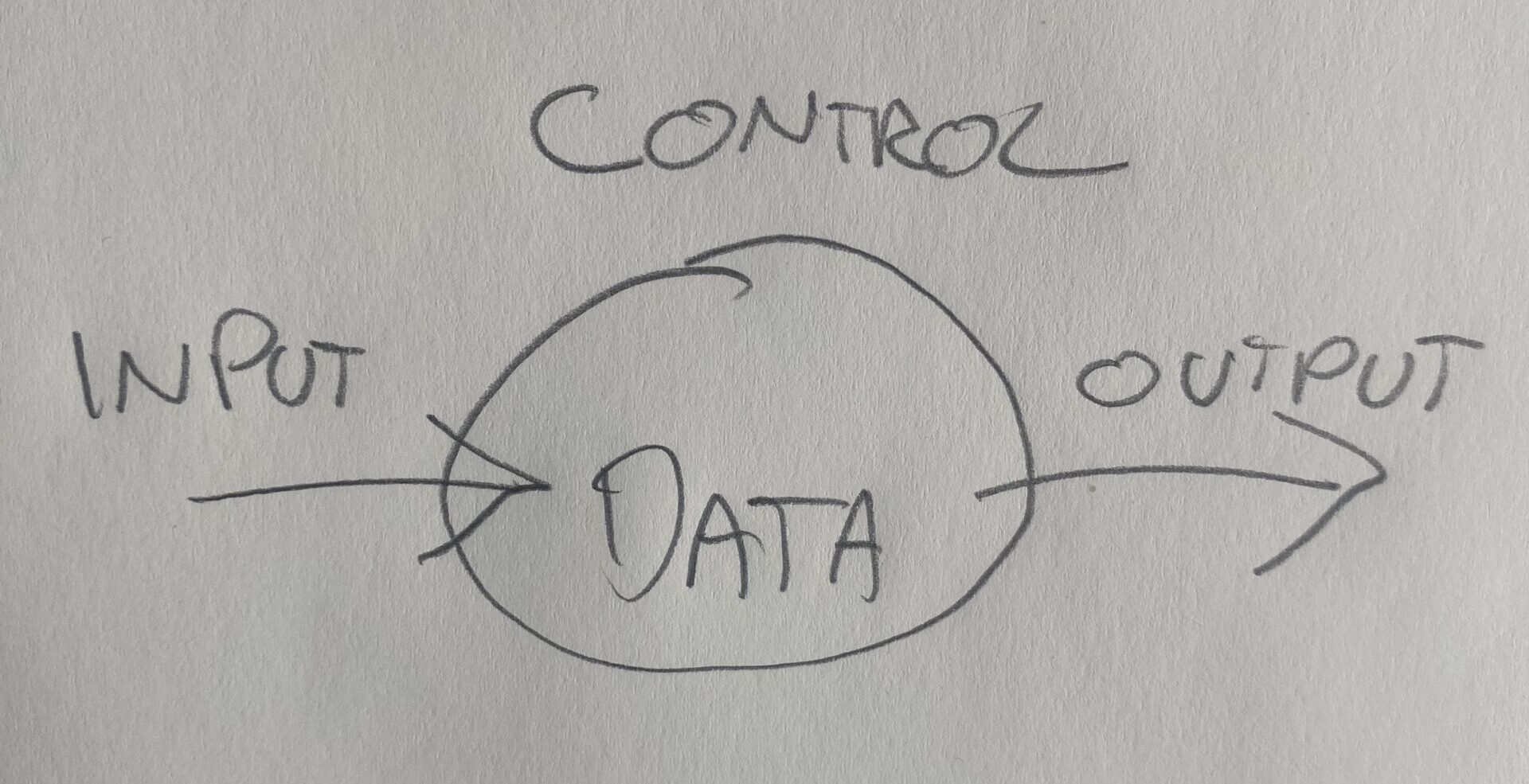

“Just moving data” -how to build the data foundation

When I first started in the IT industry I remember one of my first projects. The purpose was just to move data from one system to another and I remember wondering if that is all there was to it. I discussed this with my mentor who told me that this is what all IT is…

-

Technology is not the problem in AI

After DeepSeek’s language model sent shockwaves through the tech world and the US government announced a $500 billion investment in AI partnership, while the EU Commission is panicing about how to catch up with the US and China’s technological lead, it is appropriate to stop and reflect on whether it is really the technology at…

-

Creating valuable data is a force multiplier

It may come as no surprise that data is important for information technology and that information technology is fundamental to the operations of most organizations who consequently depend on data for their daily operations and need it to fuel new and innovative solutions. Unfortunately, it is often not managed with appropriate diligence resulting in everything…

-

Desperately seeking value – The AI value gap

There is little doubt that AI has the potential to transform the world economy. This has been reiterated in reports from the EU, IMF and major consulting companies and weekly in headlines around the world. As Lars Tvede has pointed out recently the perception is that: “(..)this is the calm before the storm. According to…

-

Is AI the new Mainframe?

No modern technology has added technical debt faster than AI. Technical debt is the future cost of reworking created by a focus on expediency rather than long-term design robustness and the implementation of AI has been expedient. Developers have often been able to pick and choose what frameworks, languages and platforms to use if they…

-

The Three Risk Traps Blocking Innovation

Many valuable innovative solutions get stranded due to the perceived risk of adopting them. Risk should be an important component in all decisions, but it might be counterproductive if it is not put into proper context and perceived adequately. There are especially three risk perception traps that block innovations. Understanding them might help to unlock…

-

Stuck in beta?

Experimentation is good but also deceptively simple. Even though the experiments can be incredibly complex and the brightest minds are involved, from an organizational perspective it is simple: you just select a problem, and then you get a budget and then you solve it. But even though you can feel and taste the solution it…

-

Architecting AI – seven principles to build production grade AI solutions

Artificial Intelligence (Ai) is high on the agenda for many organizations. Many have started to dip their feet into the waters and build AI solutions themselves, which has resulted in a large number of sandboxes, POCs, pilots, hackathons and other experimental efforts. That is positive and a necessary development to begin to harness the qualities…